Epoch 9️⃣ : This week in ML (+ Bioinformatics 🧬 and Astronomy 🌌)

Linear Transformers, M6, VGNN, NeRD, ...

Imanol Schlag, Kazuki Irie, and Jürgen Schmidhuber show the formal equivalence of linearised self-attention mechanisms and fast weight memories from the early '90s. From this observation, they infer a memory capacity limitation of recent linearised softmax attention variants. With finite memory, a desirable behavior of fast-weight memory models is to manipulate the contents of memory and dynamically interact with it. Inspired by previous work on fast weights, they propose to replace the update rule with an alternative rule yielding such behavior. They also propose a new kernel function to linearise attention, balancing simplicity and effectiveness. Link to the code. Yannic Kilcher’s Video.
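To make the equivalence concrete, here is a minimal NumPy sketch (not the authors' code). It assumes the ELU+1 feature map that is commonly used for linearised attention, not the new kernel proposed in the paper, and all function names are made up for illustration. The first two functions compute the same causal attention output, once as an additive fast weight memory and once in the usual attention form. The third shows a delta-rule style write, which overwrites the value stored under a key instead of adding to it; the exact overwrite relies on the assumption that the key features have unit norm and the write strength `beta` is 1.

```python
import numpy as np

def elu_plus_one(x):
    # Positive feature map (assumption: ELU+1, not the paper's new kernel).
    return np.where(x > 0, x + 1.0, np.exp(x))

def sum_update_attention(keys, values, queries):
    """Causal linearised attention written as an additive fast weight memory."""
    d_key, d_val = keys.shape[1], values.shape[1]
    W = np.zeros((d_val, d_key))   # fast weight matrix
    z = np.zeros(d_key)            # running normaliser
    outputs = []
    for k, v, q in zip(keys, values, queries):
        phi_k, phi_q = elu_plus_one(k), elu_plus_one(q)
        W += np.outer(v, phi_k)    # write: purely additive update
        z += phi_k
        outputs.append(W @ phi_q / (z @ phi_q))  # read with the query
    return np.stack(outputs)

def attention_form(keys, values, queries):
    """The same computation in the familiar causal attention form."""
    phi_k, phi_q = elu_plus_one(keys), elu_plus_one(queries)
    scores = np.tril(phi_q @ phi_k.T)  # mask out future positions
    return (scores @ values) / scores.sum(axis=1, keepdims=True)

def delta_rule_write(W, phi_k, v, beta):
    """Delta-rule style write: move the stored value for phi_k towards v."""
    v_old = W @ phi_k              # value currently retrieved by this key
    return W + beta * np.outer(v - v_old, phi_k)
```

With the additive update, writing twice under the same key would return the sum of both values; with the delta-rule write the second value replaces the first, which is the kind of memory editing the summary describes.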

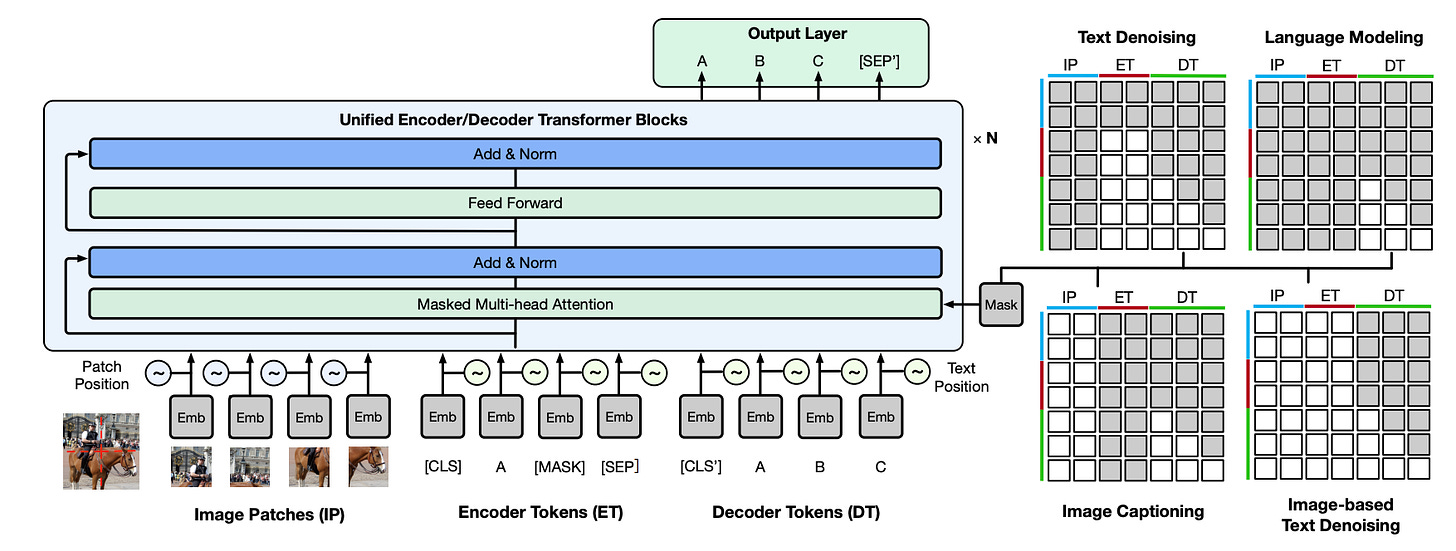

Abstract: In this work, the authors construct the largest dataset for multimodal pretraining in Chinese, which consists of over 1.9TB of images and 292GB of text covering a wide range of domains. They propose a cross-modal pretraining method called M6, referring to Multi-Modality to Multi-Modality Multitask Mega-transformer, for unified pretraining on the data of single modality and multiple modalities. They scale the model size up to 10 billion and 100 billion parameters and build the largest pretrained model in Chinese. They apply the model to a series of downstream applications and demonstrate its outstanding performance in comparison with strong baselines. Furthermore, they specifically design a downstream task of text-guided image generation and show that the finetuned M6 can create high-quality images with high resolution and abundant details.

ML + Bioinformatics 🧬

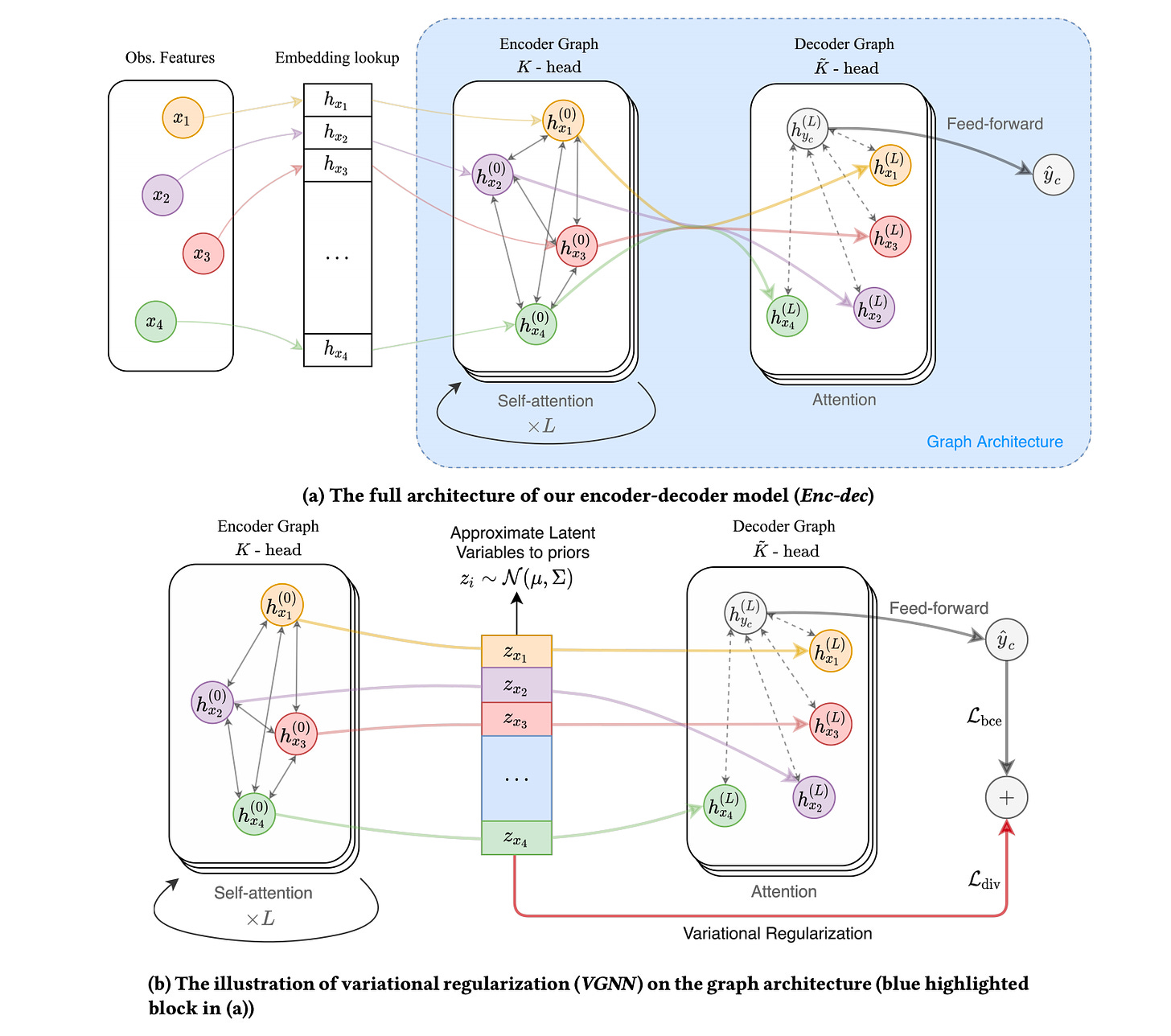

Variationally Regularized Graph-based Representation Learning for Electronic Health Records

Electronic Health Records (EHR) are high-dimensional data with implicit connections among thousands of medical concepts. A feasible approach to improving the representation learning of EHR data is to associate relevant medical concepts and utilize these connections. However, there are problems to be addressed on how to learn the medical graph adaptively and how to understand the effect of a medical graph on representation learning. In this paper, Weicheng Zhu and Narges Razavian propose a variationally regularized encoder-decoder graph network that achieves more robustness in graph structure learning by regularizing node representations. Besides the improvements in empirical experiment performances, they provide an interpretation of the effect of variational regularization compared to standard graph neural networks, using singular value analysis. Link to the code.
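As a rough illustration of what "regularizing node representations" can look like, here is a minimal NumPy sketch. It assumes the standard variational autoencoder recipe (a Gaussian distribution per node, pulled towards a standard normal prior by a KL penalty); the summary above does not spell out the exact form used in the paper, and every function name here is hypothetical. The last helper returns the singular values of an embedding matrix, the quantity a singular value analysis would inspect.

```python
import numpy as np

def reparameterize(mu, logvar, rng):
    """Sample node representations z = mu + sigma * eps (reparameterisation trick)."""
    eps = rng.normal(size=mu.shape)
    return mu + np.exp(0.5 * logvar) * eps

def kl_to_standard_normal(mu, logvar):
    """Mean over nodes of KL(N(mu, sigma^2) || N(0, I)), the regularisation term."""
    per_node = -0.5 * np.sum(1.0 + logvar - mu**2 - np.exp(logvar), axis=1)
    return per_node.mean()

def singular_values(node_embeddings):
    """Singular values of the (n_nodes, dim) embedding matrix, largest first."""
    return np.linalg.svd(node_embeddings, compute_uv=False)
```

In training, a term like `kl_to_standard_normal(mu, logvar)` would be added to the task loss with some weight, which is itself a tunable assumption.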

NeRD: Neural Representation of Distribution for Medical Image Segmentation

Hang Zhang et al. introduce the Neural Representation of Distribution (NeRD) technique, a module for convolutional neural networks (CNNs) that can estimate the feature distribution by optimizing an underlying function mapping image coordinates to the feature distribution. Using NeRD, the authors propose an end-to-end deep learning model for medical image segmentation that can compensate for the negative impact of the feature distribution shift caused by commonly used network operations such as padding and pooling. An implicit function is used to represent the parameter space of the feature distribution by querying the image coordinate. With NeRD, the impact of issues such as over-segmentation and missed regions is reduced, and experimental results on the challenging white matter lesion segmentation and left atrial segmentation tasks verify the effectiveness of the proposed method.

Astroinformatics 🌌

On the accuracy and precision of correlation functions and field-level inference in cosmology

Florent Leclercq and Alan Heavens present a comparative study of the accuracy and precision of correlation function methods and full-field inference in cosmological data analysis. To do so, they examine a Bayesian hierarchical model that predicts log-normal fields and their two-point correlation function. Although it is a simplified analytic model, the log-normal model produces fields that share many of the essential characteristics of the present-day non-Gaussian cosmological density fields. They find that:

(a) standard assumptions made to write down a likelihood for correlation functions can cause significant biases, a problem that is alleviated with simulation-based inference;

(b) analyzing the entire field offers considerable advantages over correlation functions, through higher accuracy, higher precision, or both.
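For readers who have not met log-normal fields before, the following NumPy toy may help. It is a 1D periodic sketch under my own assumptions (Gaussian smoothing filter, hypothetical function names), not the authors' Bayesian hierarchical model. It draws a correlated Gaussian field, exponentiates it into a log-normal overdensity, and estimates the two-point correlation function with an FFT.

```python
import numpy as np

def gaussian_field(n, correlation_length, sigma, rng):
    """Periodic 1D Gaussian field: white noise smoothed in Fourier space."""
    white = rng.normal(size=n)
    k = 2.0 * np.pi * np.fft.rfftfreq(n)
    smoothing = np.exp(-0.25 * (k * correlation_length) ** 2)
    g = np.fft.irfft(np.fft.rfft(white) * smoothing, n=n)
    return sigma * g / g.std()     # rescale to the requested standard deviation

def lognormal_overdensity(g):
    """Map a Gaussian field to a log-normal overdensity, bounded below by -1."""
    return np.exp(g - 0.5 * g.var()) - 1.0

def two_point_correlation(delta):
    """Periodic estimate of xi(r) = <delta(x) delta(x + r)> via the FFT."""
    d = delta - delta.mean()
    power = np.abs(np.fft.rfft(d)) ** 2
    return np.fft.irfft(power, n=d.size) / d.size
```

Raising `sigma` makes the field more strongly non-Gaussian, which is the regime where the two-point function captures less of the information in the field.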

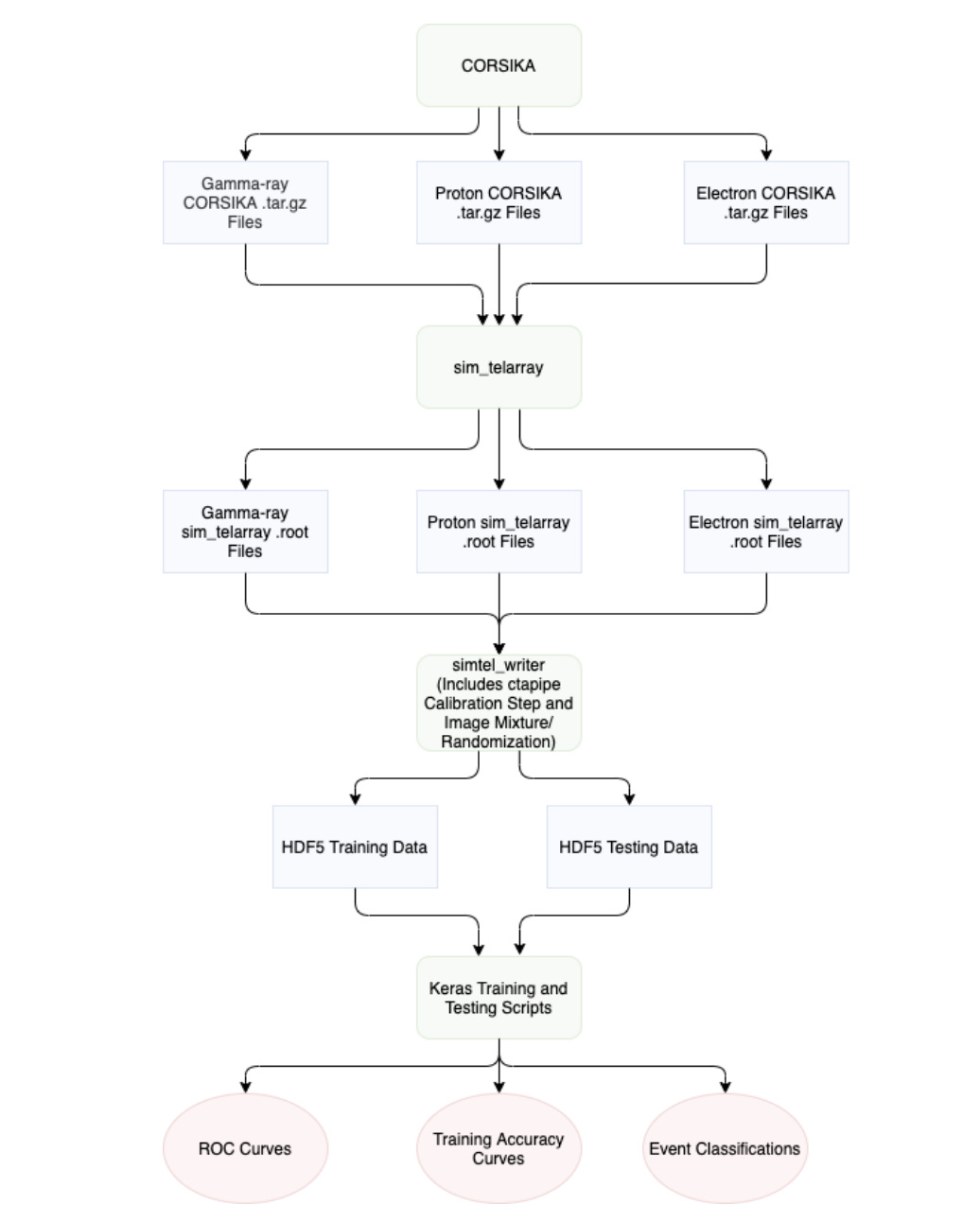

The gains depend on the degree of non-Gaussianity, but in all cases, including weak non-Gaussianity, the advantage of analyzing the full field is substantial. Link to the code.

New deep learning techniques present promising new analysis methods for Imaging Atmospheric Cherenkov Telescopes (IACTs) such as the upcoming Cherenkov Telescope Array (CTA). In particular, the use of Convolutional Neural Networks (CNNs) could provide a direct event classification method that uses the entire information contained within the Cherenkov shower image, bypassing the need to Hillas parameterize the image and allowing fast processing of the data.

In this test-of-concept simulation study, Samuel Spencer et al. investigate the potential for using camera pixel waveforms with new deep learning techniques as a background rejection method against both proton-induced and electron-induced extensive air showers (EAS). They find that one way to use the waveform information is to create a set of seven additional 2-dimensional pixel maps of waveform parameters, which are fed into the machine learning algorithm along with the integrated charge image. Link to the code.
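The idea of turning per-pixel waveforms into 2D parameter maps can be sketched in a few lines of NumPy. The summary does not list the seven parameters the authors use, so the three waveform parameters below (peak amplitude, peak time, charge-weighted mean time) are illustrative assumptions only, as are the array layout and the function name.

```python
import numpy as np

def waveform_maps(waveforms):
    """Turn a (height, width, n_samples) waveform cube into stacked 2D maps.

    Returns an array of shape (4, height, width): the integrated charge image
    followed by three illustrative waveform-parameter maps.
    """
    charge = waveforms.sum(axis=-1)                  # integrated charge image
    peak_amplitude = waveforms.max(axis=-1)
    peak_time = waveforms.argmax(axis=-1).astype(float)
    samples = np.arange(waveforms.shape[-1])
    safe_charge = np.where(charge != 0, charge, 1.0)  # avoid division by zero
    mean_time = (waveforms * samples).sum(axis=-1) / safe_charge
    return np.stack([charge, peak_amplitude, peak_time, mean_time])
```

The stacked maps can then be treated as input channels of a CNN, in the same way as the colour channels of an ordinary image.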

Explainable, Interpretable, Bias and Ethics in AI

AI Ethics Brief #46: State of AI Ethics Panel, Cold Hard Data, Responsible AI in India, and more ...

Interesting Events and News

Articles and Resources 📃 I liked

NFTs Explained: What They Are and Why They’re Selling for Millions of Dollars by Luke Heemsbergen

The Easiest Unsolved Problem in Graph Theory by Sergei Ivanov

Must-read papers on graph neural networks (GNN) by Natural Language Processing Lab at Tsinghua University

⭐️⭐️ Testing Machine Learning Systems: Code, Data and Models by MadewithML

Recommended Podcasts 🎧

DNA Today: A Genetics 🧬 Podcast | #142 Barbara Fortini on Genomic Data Analytics

Lex Fridman Podcast | #166 – Cal Newport: Deep Work, Focus, Productivity, Email, and Social Media

The Stack Overflow Podcast | Chatting with Google’s DeepMind about the future of AI

DataTalksClub | Continuous Integration for Machine Learning - Elle O'Brien