Epoch 2️⃣3️⃣: This week in ML (+ Bioinformatics 🧬 and Astronomy 🌌)

How to Train ViT, Sparsity Workshop, GNN for Mental Illness, DeepDDS and more ...

Vision Transformers (ViT) have proved viable for computer vision and have attained good performance on tasks such as image classification and semantic image segmentation. However, results on smaller training datasets show that the weaker inductive bias of these models (compared to CNNs) makes them more reliant on data augmentation and model regularization ("AugReg" for short). Steiner et al. (2021) recently conducted a systematic empirical study to better understand the interplay between the amount of training data, AugReg, model size, and compute budget. Link to the code.

Research interest in sparsity in deep learning has exploded in recent years in both academia and industry, and the organizers believe the community is now large and diverse enough to come together to discuss shared research priorities and cross-cutting issues. Currently, the communities working on aspects of sparsity and related problems are disparate, often presenting at separate venues to separate audiences. This workshop aims to bring together researchers working on the practical, theoretical, and scientific aspects of neural network sparsity, along with members of adjacent communities, in order to build connections across different areas, create opportunities for new collaborations, and articulate shared challenges.

ML in Bioinformatics 🧬

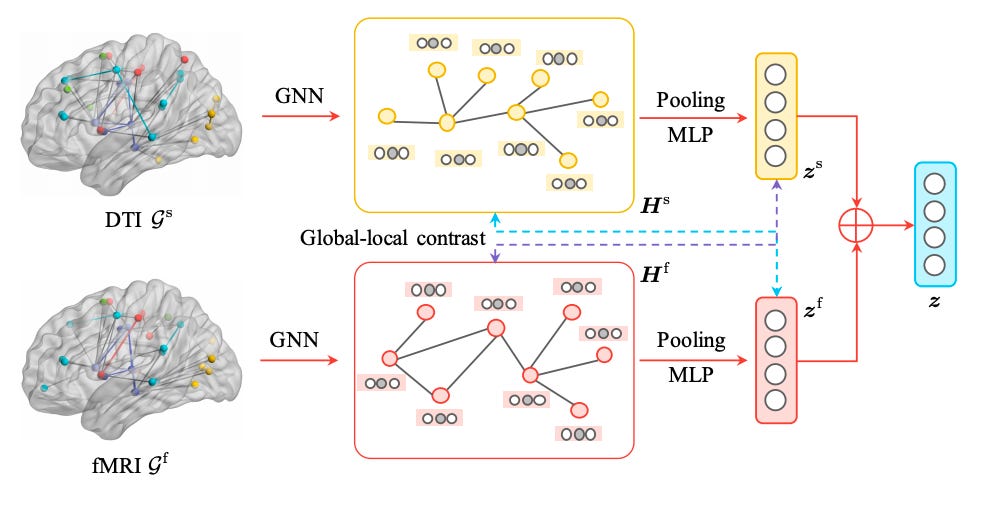

Multimodal brain networks characterize complex connectivity among different brain regions from both structural and functional perspectives and provide a new means for analyzing mental disease. Recently, Graph Neural Networks (GNNs) have become a de facto model for analyzing graph-structured data. However, how to employ GNNs to extract effective representations from brain networks in multiple modalities remains underexplored. Moreover, because brain networks provide no initial node features, how to design informative node attributes and leverage edge weights for GNNs to learn from remains unsolved. To this end, the authors develop a novel multiview GNN for multimodal brain networks. In particular, they regard each modality as a view of the brain network and employ contrastive learning for multimodal fusion. They then propose a GNN model that takes advantage of the message passing scheme by propagating messages based on degree statistics and brain region connectivity.
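To make the "no initial node features" problem concrete, here is a minimal sketch of one way to derive node attributes from degree statistics and then run a single edge-weighted message passing step. It is a simplification for illustration, not the authors' model: the function names `degree_features` and `propagate`, the choice of features, and the toy adjacency matrix are all assumptions.

```python
from typing import List

Matrix = List[List[float]]


def degree_features(adj: Matrix) -> Matrix:
    """Build per-node features from connectivity alone (hypothetical choice).

    Feature 0: weighted degree (sum of absolute edge weights).
    Feature 1: number of neighbours with a non-zero edge weight.
    """
    feats = []
    for i, row in enumerate(adj):
        weights = [abs(w) for j, w in enumerate(row) if j != i]
        feats.append([sum(weights), float(sum(1 for w in weights if w > 0))])
    return feats


def propagate(adj: Matrix, feats: Matrix) -> Matrix:
    """One round of edge-weighted mean aggregation with a unit self-loop."""
    n = len(adj)
    dim = len(feats[0])
    out = []
    for i in range(n):
        total = 1.0           # weight of the self-loop
        acc = list(feats[i])  # start from the node's own features
        for j in range(n):
            w = abs(adj[i][j]) if j != i else 0.0
            if w == 0.0:
                continue
            total += w
            for d in range(dim):
                acc[d] += w * feats[j][d]
        out.append([a / total for a in acc])
    return out


# Toy 3-region network (made-up weights): region 0 connects
# strongly to region 1 and weakly to region 2.
adj = [
    [0.0, 0.8, 0.2],
    [0.8, 0.0, 0.0],
    [0.2, 0.0, 0.0],
]
x = degree_features(adj)
h = propagate(adj, x)
```

In a multiview setting, one such pass per modality (for example, one structural and one functional adjacency matrix) would produce the per-view embeddings that a contrastive objective then aligns.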

DeepDDS: deep graph neural network with attention mechanism to predict synergistic drug combinations

In this paper, the authors propose a deep learning model based on graph neural networks and an attention mechanism to identify drug combinations that can effectively inhibit the viability of specific cancer cells. The feature embeddings of the drug molecular structures and the gene expression profiles are fed into a multi-layer feedforward neural network to identify synergistic drug combinations. Furthermore, they explore the interpretability of the graph attention network and find that the correlation matrix of atomic features reveals important chemical substructures of drugs. Link to the code.
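The final prediction step described above (embeddings in, synergy score out) can be sketched roughly as follows. This covers only the feedforward head, assuming the drug and cell-line embeddings have already been computed elsewhere. The layer sizes, random weights, and names such as `synergy_score` are hypothetical and are not taken from the DeepDDS code.

```python
import math
import random
from typing import List, Tuple

Vector = List[float]
Layer = Tuple[List[Vector], Vector]


def dense(x: Vector, weights: List[Vector], bias: Vector) -> Vector:
    """Fully connected layer: one output per weight row."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, bias)]


def relu(x: Vector) -> Vector:
    return [max(0.0, v) for v in x]


def sigmoid(v: float) -> float:
    return 1.0 / (1.0 + math.exp(-v))


def init_layer(rng: random.Random, n_in: int, n_out: int) -> Layer:
    """Random, untrained weights, used purely to make the sketch runnable."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)]
               for _ in range(n_out)]
    return weights, [0.0] * n_out


def synergy_score(drug_a: Vector, drug_b: Vector, cell_line: Vector,
                  layers: Tuple[Layer, Layer]) -> float:
    """Concatenate the three embeddings and map them to a score in (0, 1)."""
    x = drug_a + drug_b + cell_line
    (w1, b1), (w2, b2) = layers
    hidden = relu(dense(x, w1, b1))
    return sigmoid(dense(hidden, w2, b2)[0])


rng = random.Random(0)
# Placeholder embeddings; in practice these would come from a GNN over each
# drug's molecular graph and an encoder over the gene expression profile.
drug_a = [0.1, 0.4, -0.2]
drug_b = [0.3, -0.1, 0.5]
cell_line = [0.2, 0.0, -0.3, 0.7]
layers = (init_layer(rng, 10, 8), init_layer(rng, 8, 1))
score = synergy_score(drug_a, drug_b, cell_line, layers)
```

With untrained weights the score is meaningless; the point is only the data flow from the three embeddings to a single probability-like output.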

Astroinformatics 🌌

Radio Galaxy Zoo: ClaRAN - A Deep Learning Classifier for Radio Morphologies

The upcoming next-generation large-area radio continuum surveys are expected to detect tens of millions of radio sources, rendering the traditional method of radio morphology classification through visual inspection infeasible. Thus, the authors present ClaRAN - Classifying Radio sources Automatically with Neural networks - a proof-of-concept radio source morphology classifier based upon the Faster Region-based Convolutional Neural Networks (Faster R-CNN) method. Specifically, they train and test ClaRAN on the FIRST and WISE images from the Radio Galaxy Zoo Data Release 1 catalogue. ClaRAN provides end users with automated radio source morphology classifications from a simple input of a radio image and a counterpart infrared image of the same region. ClaRAN is the first open-source, end-to-end radio source morphology classifier that is capable of locating and associating discrete and extended components of radio sources in a fast (< 200 milliseconds per image) and accurate (>= 90 %) fashion. Link to the code.

Towards Machine Learning-Based Meta-Studies: Applications to Cosmological Parameters

The authors develop a new model for automatically extracting reported measurement values from the astrophysical literature, using modern Natural Language Processing techniques. They use this model to extract measurements from the abstracts of approximately 248,000 astrophysics articles in the arXiv repository, yielding a database of over 231,000 astrophysical numerical measurements. Furthermore, they present an online interface (Numerical Atlas) that allows users to query and explore this database by parameter name and symbolic representation, and to download the resulting datasets for their own research. To illustrate potential use cases, they then collect values for nine different cosmological parameters using this tool.
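To give a feel for the extraction task, here is a deliberately naive regular-expression baseline that pulls `name = value ± error` patterns out of a sentence. It is not the authors' model, which relies on learned NLP techniques. The pattern, the function `extract_measurements`, and the example sentence with its numbers are made up for illustration.

```python
import re
from typing import Dict, List, Optional, Union

# Matches text such as "H_0 = 67.4 ± 0.5" or "Omega_m = 0.315 +/- 0.007".
# The uncertainty part is optional.
MEASUREMENT = re.compile(
    r"(?P<name>[A-Za-z]\w*)\s*=\s*"
    r"(?P<value>-?\d+(?:\.\d+)?)"
    r"(?:\s*(?:±|\+/-)\s*(?P<error>\d+(?:\.\d+)?))?"
)

Record = Dict[str, Union[str, float, None]]


def extract_measurements(text: str) -> List[Record]:
    """Return every 'name = value [± error]' match found in the text."""
    results: List[Record] = []
    for match in MEASUREMENT.finditer(text):
        err: Optional[str] = match.group("error")
        results.append({
            "name": match.group("name"),
            "value": float(match.group("value")),
            "error": float(err) if err is not None else None,
        })
    return results


# Invented example sentence, not taken from any real abstract.
abstract = (
    "We find H_0 = 67.4 ± 0.5 km/s/Mpc and Omega_m = 0.315 +/- 0.007, "
    "with sigma_8 = 0.811 from the combined data."
)
measurements = extract_measurements(abstract)
for record in measurements:
    print(record)
```

A baseline like this misses anything phrased in words ("a Hubble constant of about 70"), asymmetric errors, and LaTeX markup, which is the kind of variety that motivates a learned model.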

Explainable, Interpretable, Bias and Ethics in AI

Interesting Events and News 📰

Someone Leaked The Next IPCC Report. Here's How Experts Are Reacting

Gigantic Antarctic Lake Suddenly Disappears in Monumental Vanishing Act

Life on Venus Would Have to Be a 'New Type of Organism', Astronomers Now Claim

A Major Prediction Stephen Hawking Made About Black Holes Has Finally Been Observed

XAI: Learning Fairness with Interpretable Machine | July 9 IST

Articles and Resources 📃 I liked

Recommended Podcasts 🎧

Gradient Dissent | How Pandora deploys machine learning models into production with Amelia and Filip

Gradient Dissent | The rise of big data and responding to COVID-19 with Roger and DJ

TWIML AI Podcast | The Future of Human-Machine Interaction with Dan Bohus and Siddhartha Sen - #499

Robot Brains Podcast | Anca Dragan on why Asimov's three laws of robotics need updating

Talk Python To Me | #323: Best practices for Docker in production